
Why Your .NET API Is Slow: 7 Proven Fixes for Production Systems

Introduction

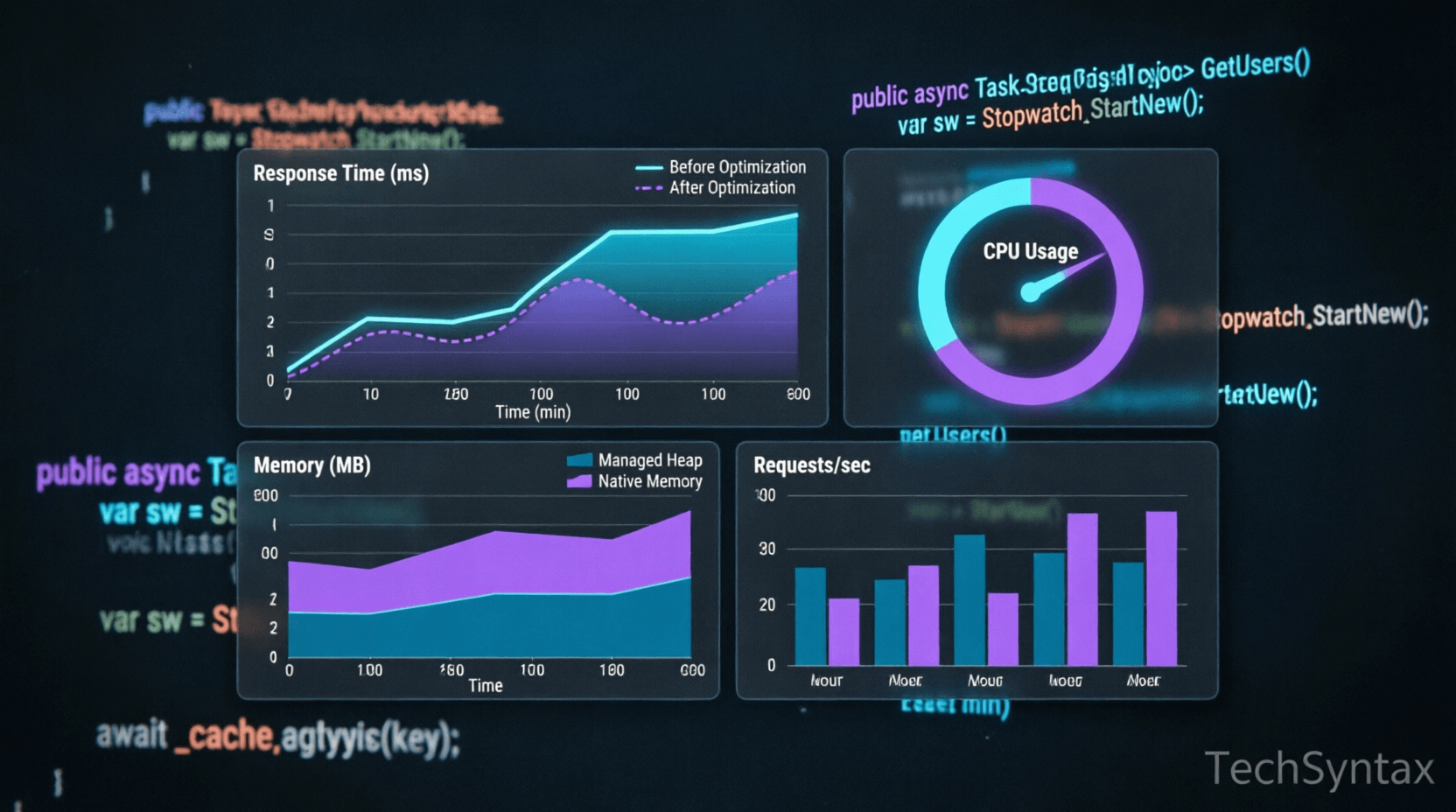

Your .NET API responds in 800ms when it should handle requests in 50ms. Users complain about latency. Your monitoring dashboards show spiking CPU and memory. You've added more servers, but the problem persists. This is the reality many backend engineers face when .NET API performance degrades under production load.

Performance bottlenecks in ASP.NET Core applications rarely have a single cause. They emerge from a combination of inefficient database queries, improper async/await usage, excessive allocations, blocking I/O operations, and misconfigured infrastructure. Without systematic profiling and targeted optimization, you're guessing—which wastes engineering time and frustrates users.

This article dives deep into the internal mechanics of .NET request processing, identifies the seven most common performance killers in production APIs, and provides battle-tested fixes with real code examples. You'll learn how to diagnose bottlenecks using profiling tools, optimize async patterns, implement strategic caching, and tune database access patterns for high-throughput systems.

Quick Overview

Before we dive into technical details, here's what typically causes slow .NET APIs:

- Synchronous I/O operations blocking thread pool threads

- N+1 database queries from lazy loading or inefficient ORM usage

- Missing or misconfigured caching layers

- Excessive object allocations triggering frequent garbage collection

- Improper async/await patterns causing thread starvation

- Unoptimized Entity Framework queries loading unnecessary data

- Missing connection pooling or exhausted database connections

Each of these issues compounds under load, creating cascading failures that degrade user experience and increase infrastructure costs.

What Is .NET API Performance Optimization?

.NET API performance optimization is the systematic process of identifying, measuring, and eliminating bottlenecks in ASP.NET Core applications to achieve target response times, throughput, and resource utilization. It's not about premature micro-optimizations—it's about understanding your application's critical path and removing obstacles that prevent it from scaling.

Performance engineering in .NET involves multiple layers: runtime configuration, application code patterns, database access strategies, infrastructure setup, and monitoring. The goal is to build systems that maintain sub-100ms response times under expected load while efficiently utilizing CPU, memory, and I/O resources.

Modern .NET applications face unique challenges: distributed tracing across microservices, handling thousands of concurrent connections, processing large payloads, and integrating with external APIs. Each layer introduces potential latency that accumulates into poor user experience.

How .NET API Request Processing Works Internally


Understanding the internal request lifecycle is crucial for identifying where performance degrades. When an HTTP request hits your ASP.NET Core API, it traverses several execution stages:

Request Pipeline Execution

The request enters through Kestrel, the cross-platform web server, which parses the HTTP protocol and creates an HttpContext. It then flows through the middleware pipeline—authentication, logging, routing, and endpoint execution. Each middleware component can add latency, especially if it performs blocking operations or excessive allocations.

Dependency Injection and Controller Activation

The DI container resolves controller dependencies, instantiating services with their configured lifetimes (Transient, Scoped, Singleton). Scoped services are created per request, which means frequent allocations. If your services have heavy constructors or resolve deep dependency graphs, this adds measurable overhead.

Async State Machine Execution

When you use async/await, the C# compiler generates a state machine that manages continuation execution. Improper async patterns—like async void, blocking on async code with .Result, or excessive async overhead for CPU-bound work—can degrade performance instead of improving it. Understanding when async provides benefits (I/O-bound operations) versus when it adds overhead (trivial synchronous operations) is critical.

Database Access and ORM Overhead

Entity Framework Core translates LINQ expressions into SQL, tracks entity changes, and materializes results into objects. This abstraction layer adds overhead: expression tree compilation, query translation, change tracking, and object materialization. Without proper optimization—like AsNoTracking(), selective projections, and compiled queries—EF Core can generate inefficient SQL or load far more data than needed.

Thread Pool and I/O Completion

.NET uses a thread pool to handle concurrent requests. When async I/O operations complete, continuations execute on thread pool threads. If you block threads synchronously or create too many tasks, you exhaust the thread pool, causing thread starvation where requests queue waiting for available threads. This manifests as suddenly degraded response times under load.
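To make the starvation effect concrete, here is a minimal console sketch, not taken from any particular production system. It simulates a batch of requests that each wait on I/O, once by blocking a thread pool thread with Thread.Sleep and once by awaiting Task.Delay. The request count, the delay, and the helper names are assumptions for illustration, and exact timings vary by machine.

```csharp
using System;
using System.Diagnostics;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

const int RequestCount = 64;   // hypothetical number of concurrent requests
const int IoDelayMs = 200;     // hypothetical I/O latency per request

// Blocking version: each simulated request holds a thread pool thread for the whole wait.
Task HandleBlocking() => Task.Run(() => Thread.Sleep(IoDelayMs));

// Async version: no thread is held while the timer is pending.
async Task HandleAsync() => await Task.Delay(IoDelayMs);

// Starts all simulated requests at once and measures how long the batch takes.
async Task<TimeSpan> MeasureAsync(Func<Task> handler)
{
    var stopwatch = Stopwatch.StartNew();
    await Task.WhenAll(Enumerable.Range(0, RequestCount).Select(_ => handler()));
    return stopwatch.Elapsed;
}

TimeSpan blocking = await MeasureAsync(HandleBlocking);
TimeSpan nonBlocking = await MeasureAsync(HandleAsync);

Console.WriteLine($"Blocking:     {blocking.TotalMilliseconds:F0} ms");
Console.WriteLine($"Non-blocking: {nonBlocking.TotalMilliseconds:F0} ms");
```

On a machine with fewer cores than simulated requests, the blocking run usually takes noticeably longer, because the pool injects extra threads only gradually. The async run tends to finish close to a single delay.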

[Insert Internal Mechanism Diagram here showing the request lifecycle flow]

Architecture and System Design Considerations


Performance isn't just about code—it's about architecture. Production .NET APIs require thoughtful design decisions at multiple levels:

Layered Architecture with Caching Strategy

A typical high-performance API separates concerns into distinct layers: Controllers handle HTTP concerns, Services contain business logic, and Repositories manage data access. Between these layers, strategic caching reduces redundant work:

- Response Caching: Cache entire HTTP responses for idempotent GET requests using distributed cache (Redis)

- Data Caching: Cache frequently accessed reference data at the service layer

- Query Result Caching: Cache expensive database query results with appropriate invalidation strategies

Database Connection Management

Database connections are expensive resources. ADO.NET maintains connection pools, but misconfiguration causes problems: too small a pool causes connection starvation; too large wastes resources. Use connection pooling with appropriate Max Pool Size settings, and always dispose connections promptly using using statements or dependency injection with proper lifetimes.

Horizontal Scaling Considerations

When scaling horizontally across multiple instances, stateful operations become bottlenecks. Session state, in-memory caches, and singleton services with state must be externalized to distributed systems like Redis. Stateless services scale more predictably.

[Insert Architecture Diagram here showing layered architecture with caching and database]

Implementation Guide: 7 Proven Fixes

Fix #1: Eliminate Synchronous I/O Operations

Blocking I/O is the most common performance killer in .NET APIs. When you call synchronous database methods, file I/O, or external HTTP requests, you block thread pool threads that could handle other requests.

    // ❌ BAD: Synchronous blocking
    [HttpGet("{id}")]
    public IActionResult GetUser(int id)
    {
        var user = _dbContext.Users.Find(id); // Blocks the request thread
        var orders = _dbContext.Orders
            .Where(o => o.UserId == id)
            .ToList(); // Another blocking call
        return Ok(new { user, orders });
    }

    // ✅ GOOD: Asynchronous I/O
    [HttpGet("{id}")]
    public async Task<IActionResult> GetUser(int id)
    {
        var user = await _dbContext.Users.FindAsync(id); // Thread is free while waiting
        if (user is null)
        {
            return NotFound();
        }

        var orders = await _dbContext.Orders
            .Where(o => o.UserId == id)
            .ToListAsync(); // Thread is free while waiting
        return Ok(new { user, orders });
    }

Async I/O releases threads back to the pool while waiting for I/O completion, allowing the server to handle more concurrent requests with the same resources. For deeper understanding, see our guide on C# Async/Await: Performance & Best Practices.

Fix #2: Optimize Entity Framework Queries

Inefficient database queries are the second most common bottleneck. Common issues include loading unnecessary columns, N+1 queries, and missing indexes.

    // ❌ BAD: Loading entire entities when only a few fields are needed
    var users = await _dbContext.Users.ToListAsync(); // Fetches every column of every row
    var result = users.Select(u => new { u.Id, u.Name, u.Email }); // Projection happens in memory

    // ❌ BAD: N+1 query problem
    var orders = await _dbContext.Orders.ToListAsync();
    foreach (var order in orders)
    {
        var customer = await _dbContext.Customers.FindAsync(order.CustomerId); // Query per iteration!
    }

    // ✅ GOOD: Projection to DTO reduces data transfer
    var users = await _dbContext.Users
        .Select(u => new UserDto
        {
            Id = u.Id,
            Name = u.Name,
            Email = u.Email
        })
        .ToListAsync();

    // ✅ GOOD: Eager loading with Include
    var orders = await _dbContext.Orders
        .Include(o => o.Customer)
        .ToListAsync();

    // ✅ BEST: AsNoTracking for read-only queries
    var users = await _dbContext.Users
        .AsNoTracking()
        .Where(u => u.IsActive)
        .Select(u => new UserDto { Id = u.Id, Name = u.Name })
        .ToListAsync();

Use SQL Server Profiler or EF Core logging to identify slow queries. Add appropriate database indexes on frequently filtered columns.
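The N+1 pattern is easier to see when round trips are counted. The sketch below does not use EF Core. It simulates a database with in-memory collections and a query counter, so the names customersTable, LoadCustomer, and LoadCustomers are hypothetical and exist only for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// In-memory stand-ins for two tables (hypothetical data).
var customersTable = new Dictionary<int, string> { [1] = "Ada", [2] = "Linus", [3] = "Grace" };
var orders = new List<(int OrderId, int CustomerId)> { (100, 1), (101, 2), (102, 1), (103, 3) };

int roundTrips = 0; // counts simulated database calls

// One simulated query per customer id.
string LoadCustomer(int id) { roundTrips++; return customersTable[id]; }

// One simulated query for a whole set of ids (similar to WHERE Id IN (...)).
Dictionary<int, string> LoadCustomers(IEnumerable<int> ids)
{
    roundTrips++;
    return ids.Distinct().ToDictionary(id => id, id => customersTable[id]);
}

// N+1 style: one lookup per order.
roundTrips = 0;
var slow = orders.Select(o => (o.OrderId, Customer: LoadCustomer(o.CustomerId))).ToList();
int nPlusOneTrips = roundTrips;

// Batched style: collect the ids, then fetch them in one call.
roundTrips = 0;
var byId = LoadCustomers(orders.Select(o => o.CustomerId));
var fast = orders.Select(o => (o.OrderId, Customer: byId[o.CustomerId])).ToList();
int batchedTrips = roundTrips;

Console.WriteLine($"Per-order lookups: {nPlusOneTrips} round trips");
Console.WriteLine($"Batched lookup:    {batchedTrips} round trip");
```

Both approaches produce the same rows, but the per-order version issues one call per order while the batched version issues one call in total. In EF Core, Include or a projection typically plays the role of the batched call.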

Fix #3: Implement Strategic Caching

Caching reduces redundant work by storing results of expensive operations. The key is choosing the right cache strategy and invalidation policy.

    // ✅ Distributed caching with Redis
    public class UserService
    {
        private static readonly TimeSpan CacheDuration = TimeSpan.FromMinutes(10);

        private readonly IDistributedCache _cache;
        private readonly IDbContextFactory<AppDbContext> _dbContextFactory;

        public UserService(IDistributedCache cache, IDbContextFactory<AppDbContext> dbContextFactory)
        {
            _cache = cache;
            _dbContextFactory = dbContextFactory;
        }

        // Returns null when the user does not exist
        public async Task<UserDto?> GetUserById(int id)
        {
            string cacheKey = $"user:{id}";

            // Try cache first
            var cachedUser = await _cache.GetStringAsync(cacheKey);
            if (cachedUser != null)
            {
                return JsonSerializer.Deserialize<UserDto>(cachedUser);
            }

            // Cache miss - query database
            await using var context = await _dbContextFactory.CreateDbContextAsync();
            var user = await context.Users
                .AsNoTracking()
                .Where(u => u.Id == id)
                .Select(u => new UserDto { Id = u.Id, Name = u.Name })
                .FirstOrDefaultAsync();

            if (user != null)
            {
                // Populate cache
                var serialized = JsonSerializer.Serialize(user);
                await _cache.SetStringAsync(cacheKey, serialized, new DistributedCacheEntryOptions
                {
                    AbsoluteExpirationRelativeToNow = CacheDuration
                });
            }

            return user;
        }
    }

For more caching strategies, check out our article on Microservices vs Monolithic Architecture, which covers cross-cutting concerns like caching.
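The cache-aside flow above does not depend on Redis. As a rough illustration of the same read path plus explicit invalidation, here is a minimal in-process sketch built on ConcurrentDictionary. It is a stand-in under stated assumptions, not a replacement for IDistributedCache or IMemoryCache: the GetOrLoad and Invalidate helpers, the 10-minute TTL, and the fake loader are all hypothetical.

```csharp
using System;
using System.Collections.Concurrent;

var ttl = TimeSpan.FromMinutes(10); // hypothetical TTL, mirroring CacheDuration above
var cache = new ConcurrentDictionary<string, (string Value, DateTimeOffset ExpiresAt)>();
int loads = 0; // counts how often the expensive loader runs

// Stand-in for an expensive database query.
string LoadUserName(int id) { loads++; return $"user-{id}"; }

// Cache-aside read: return a fresh entry if present, otherwise load and store.
string GetOrLoad(int id)
{
    string key = $"user:{id}";
    if (cache.TryGetValue(key, out var entry) && entry.ExpiresAt > DateTimeOffset.UtcNow)
        return entry.Value;

    string value = LoadUserName(id);
    cache[key] = (value, DateTimeOffset.UtcNow + ttl);
    return value;
}

// Explicit invalidation, to be called when the underlying record changes.
void Invalidate(int id) => cache.TryRemove($"user:{id}", out _);

GetOrLoad(42);   // miss: runs the loader
GetOrLoad(42);   // hit: served from the cache
Invalidate(42);  // for example, after an update
GetOrLoad(42);   // miss again: reloads

Console.WriteLine($"Loader ran {loads} times for 3 reads");
```

Note that two concurrent misses for the same key may both run the loader in this sketch. That is often acceptable, but very expensive loaders may need per-key locking.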

Fix #4: Reduce Allocations and GC Pressure

Excessive object allocations trigger frequent garbage collection, causing latency spikes. Use object pooling, avoid unnecessary allocations in hot paths, and prefer struct over class for small, short-lived objects.

    // ❌ BAD: Allocating strings in loops
    public string ConcatenateItems(IEnumerable<string> items)
    {
        string result = "";
        foreach (var item in items)
        {
            result += item + ","; // Creates new string each iteration
        }
        return result;
    }

    // ✅ GOOD: Use StringBuilder
    public string ConcatenateItems(IEnumerable<string> items)
    {
        var sb = new StringBuilder();
        foreach (var item in items)
        {
            sb.Append(item).Append(',');
        }
        return sb.ToString();
    }

    // ✅ BETTER: Use string.Join for simple cases (also avoids the trailing comma)
    public string ConcatenateItems(IEnumerable<string> items)
    {
        return string.Join(",", items);
    }

    // ✅ Object pooling for expensive objects (ObjectPool<T> from Microsoft.Extensions.ObjectPool)
    // Note: JsonSerializerOptions is thread-safe once in use, so a single shared instance
    // is usually enough; pooling pays off more for mutable objects such as StringBuilder.
    public class PooledJsonService
    {
        private readonly ObjectPool<JsonSerializerOptions> _jsonOptionsPool;

        public PooledJsonService(ObjectPool<JsonSerializerOptions> jsonOptionsPool)
        {
            _jsonOptionsPool = jsonOptionsPool;
        }

        public string Serialize(object data)
        {
            var options = _jsonOptionsPool.Get();
            try
            {
                return JsonSerializer.Serialize(data, options);
            }
            finally
            {
                _jsonOptionsPool.Return(options); // Always hand the instance back
            }
        }
    }

For deeper insights, read our guide on .NET Memory Management: Value vs Reference Types.
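For buffers specifically, the runtime already ships a pool. The following minimal sketch uses ArrayPool<byte>.Shared from System.Buffers to reuse a scratch buffer instead of allocating a new array on every call. The Checksum helper and its input are made up for illustration.

```csharp
using System;
using System.Buffers;
using System.Text;

// Sums the UTF-8 bytes of a string using a rented buffer instead of a fresh array.
int Checksum(string text)
{
    int maxBytes = Encoding.UTF8.GetMaxByteCount(text.Length);
    byte[] buffer = ArrayPool<byte>.Shared.Rent(maxBytes); // may be larger than requested
    try
    {
        int written = Encoding.UTF8.GetBytes(text, 0, text.Length, buffer, 0);
        int sum = 0;
        for (int i = 0; i < written; i++) sum += buffer[i];
        return sum;
    }
    finally
    {
        ArrayPool<byte>.Shared.Return(buffer); // always hand the buffer back
    }
}

Console.WriteLine(Checksum("abc")); // prints 294 (97 + 98 + 99)
```

Because a rented array can be longer than requested and may contain stale data, the code only reads the first written bytes rather than the whole buffer.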

Fix #5: Use Response Compression

Large JSON responses consume bandwidth and increase latency. Enable response compression to reduce payload size.
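To get a feel for how much repetitive JSON shrinks, this small sketch compresses a synthetic payload with the GZipStream and BrotliStream classes from System.IO.Compression. It only illustrates the size effect outside of ASP.NET Core. The payload shape is an assumption, and real ratios depend on your data.

```csharp
using System;
using System.IO;
using System.IO.Compression;
using System.Linq;
using System.Text;

// Synthetic JSON array with repetitive property names (hypothetical payload).
string json = "[" + string.Join(",",
    Enumerable.Range(1, 500).Select(i => $"{{\"id\":{i},\"name\":\"user{i}\",\"isActive\":true}}")) + "]";
byte[] raw = Encoding.UTF8.GetBytes(json);

// Compresses the input with whichever compression stream the factory wraps around the output.
byte[] Compress(byte[] input, Func<Stream, Stream> wrap)
{
    using var output = new MemoryStream();
    using (Stream compressor = wrap(output))
    {
        compressor.Write(input, 0, input.Length);
    } // disposing the compressor flushes the final block
    return output.ToArray();
}

byte[] gzip = Compress(raw, s => new GZipStream(s, CompressionLevel.Fastest, leaveOpen: true));
byte[] brotli = Compress(raw, s => new BrotliStream(s, CompressionLevel.Fastest, leaveOpen: true));

Console.WriteLine($"Raw:    {raw.Length} bytes");
Console.WriteLine($"Gzip:   {gzip.Length} bytes");
Console.WriteLine($"Brotli: {brotli.Length} bytes");
```

Compression trades CPU time for bandwidth, so it tends to help most on larger text payloads and slower links.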

    // Program.cs
    builder.Services.AddResponseCompression(options =>
    {
        options.EnableForHttps = true;
        options.Providers.Add<BrotliCompressionProvider>();
        options.Providers.Add<GzipCompressionProvider>();
        options.MimeTypes = ResponseCompressionDefaults.MimeTypes.Concat(
            new[] { "application/json" });
    });

    // After the app is built, register the middleware early in the pipeline
    app.UseResponseCompression();

Fix #6: Optimize Dependency Injection Lifetimes

Incorrect service lifetimes cause memory leaks or excessive allocations. Use Singleton for stateless services, Scoped for per-request services, and avoid capturing scoped services in singletons.

    // ✅ Correct lifetime usage
    builder.Services.AddSingleton<ICacheService, RedisCacheService>(); // Stateless, thread-safe
    builder.Services.AddScoped<IUserService, UserService>(); // Per-request, uses DbContext
    builder.Services.AddTransient<ITokenGenerator, TokenGenerator>(); // Lightweight, stateless

    // ❌ BAD: Capturing scoped service in singleton
    public class BadService
    {
        private readonly DbContext _context; // Scoped service captured in singleton!

        public BadService(DbContext context) // This will cause issues
        {
            _context = context;
        }
    }

Fix #7: Profile and Monitor Continuously

Use profiling tools to identify bottlenecks before optimizing:

    // Program.cs: use MiniProfiler in development
    builder.Services.AddMiniProfiler(options =>
    {
        options.RouteBasePath = "/profiler";
    });

    // Program.cs: use Application Insights for production monitoring
    builder.Services.AddApplicationInsightsTelemetry();

    // Controller: custom metrics with the Meter API (System.Diagnostics.Metrics)
    private static readonly Meter Meter = new("MyApp", "1.0");
    private static readonly Histogram<double> RequestDuration =
        Meter.CreateHistogram<double>("request.duration", "ms");

    public async Task<IActionResult> GetData()
    {
        var stopwatch = Stopwatch.StartNew();
        try
        {
            // _service is a hypothetical dependency; replace with your own logic
            var data = await _service.GetDataAsync();
            return Ok(data);
        }
        finally
        {
            stopwatch.Stop();
            RequestDuration.Record(stopwatch.Elapsed.TotalMilliseconds); // Fractional milliseconds
        }
    }

Performance Considerations


Each optimization technique has different performance characteristics. Here's a comparison of typical improvements:

| Optimization Technique | Response Time Impact | Throughput Impact | Memory Impact | Complexity |
|---|---|---|---|---|
| Async I/O | 30-50% reduction | 2-5x improvement | Lower | Medium |
| Query Optimization | 50-90% reduction | 3-10x improvement | Lower | Medium |
| Distributed Caching | 70-95% reduction | 5-20x improvement | Higher (cache memory) | High |
| Reduced Allocations | 10-30% reduction | 1.5-3x improvement | Much Lower | Medium |
| Response Compression | 40-70% size reduction | Minimal | Slightly Higher | Low |
| Connection Pooling | 20-40% reduction | 2-4x improvement | Lower | Low |

[Insert Performance Comparison Chart here showing the 7 techniques side-by-side]

The actual impact depends on your specific workload, but database optimization and caching typically provide the highest ROI.

Security Considerations

Performance optimizations must not compromise security:

- Cache Security: Never cache sensitive data (PII, tokens) without encryption. Validate cache keys to prevent cache poisoning attacks.

- Query Parameters: Always parameterize database queries to prevent SQL injection, even when optimizing.

- Rate Limiting: Implement rate limiting to prevent abuse, especially on cached endpoints that might expose data.

- Compression: Be aware of CRIME and BREACH attacks when using compression with sensitive data.

- Error Handling: Don't expose stack traces or internal errors in production, even when debugging performance issues.

Common Mistakes Developers Make

1. Using async/await Everywhere Without Understanding

Developers often add async/await to CPU-bound operations where it adds overhead without benefit. Async provides value for I/O-bound operations (database, HTTP, file I/O), not CPU calculations.

2. Ignoring Database Indexes

No amount of code optimization compensates for missing database indexes on frequently queried columns. Always analyze query execution plans.

3. Caching Everything Without Invalidation Strategy

Caching without proper invalidation leads to stale data and hard-to-debug issues. Define clear TTL policies and cache invalidation events.

4. Loading Entire Entities for Simple Operations

Fetching entire database rows when you only need 2-3 fields wastes memory, network bandwidth, and CPU. Use projections (Select) to load only required data.

5. Blocking on Async Code

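Calling .Result or .Wait() on async tasks blocks the calling thread and can lead to thread pool starvation under load. In environments that have a synchronization context, such as classic ASP.NET or UI applications, it can also deadlock. Use async all the way up the call stack.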

6. Not Configuring Connection Pooling

Creating new database connections for each request is expensive. Rely on ADO.NET's built-in connection pooling with appropriate pool size settings.

7. Optimizing Without Measuring

Premature optimization without profiling leads to wasted effort. Always measure first, optimize the bottleneck, then measure again to verify improvement.
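As a minimal sketch of measuring first, the snippet below times a hypothetical operation with Stopwatch and reports median and 95th-percentile latency. It is no substitute for a profiler or BenchmarkDotNet. The DoWork function, the sample count, and the nearest-rank Percentile helper are assumptions for illustration.

```csharp
using System;
using System.Diagnostics;
using System.Linq;

// Hypothetical operation under test; replace with the call you want to measure.
int DoWork() => Enumerable.Range(0, 10_000).Sum(x => x % 7);

// Nearest-rank percentile over an array sorted in ascending order.
double Percentile(double[] sorted, double p)
{
    int rank = (int)Math.Ceiling(p / 100.0 * sorted.Length);
    return sorted[Math.Clamp(rank - 1, 0, sorted.Length - 1)];
}

const int Samples = 200;
var timingsMs = new double[Samples];

DoWork(); // warm-up so JIT compilation does not skew the first sample

for (int i = 0; i < Samples; i++)
{
    var stopwatch = Stopwatch.StartNew();
    DoWork();
    timingsMs[i] = stopwatch.Elapsed.TotalMilliseconds;
}

Array.Sort(timingsMs);
Console.WriteLine($"p50: {Percentile(timingsMs, 50):F3} ms");
Console.WriteLine($"p95: {Percentile(timingsMs, 95):F3} ms");
```

Running the same measurement before and after a change gives a simple baseline to compare against. Tail percentiles such as p95 often reveal regressions that an average hides.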

Best Practices

- Profile before optimizing—use tools like MiniProfiler, Application Insights, or dotTrace

- Set performance budgets and SLOs (Service Level Objectives) for critical endpoints

- Implement distributed tracing to identify cross-service latency

- Use connection pooling with appropriate Max Pool Size (default is 100)

- Enable HTTP/2 for better multiplexing and reduced latency

- Tune the .NET thread pool (for example, the minimum thread count) only when measurements show starvation; Kestrel runs request handling on the runtime's shared thread pool

- Use AsNoTracking() for read-only EF Core queries

- Implement circuit breakers for external service calls to prevent cascading failures

- Monitor GC pressure and allocation rates in production

- Load test regularly to identify performance regressions before deployment

Real-World Production Use Cases

E-Commerce API Scaling

An e-commerce platform handling 10,000 requests/second reduced average response time from 450ms to 85ms by implementing Redis caching for product catalogs, optimizing database queries with proper indexing, and using async I/O throughout the stack.

Financial Services Microservices

A financial services company reduced latency spikes from 2 seconds to 150ms by eliminating blocking I/O in their transaction processing API, implementing connection pooling, and using compiled EF Core queries for frequent operations.

SaaS Multi-Tenant Application

A SaaS platform serving 50,000 concurrent users improved throughput 5x by implementing response caching, optimizing dependency injection lifetimes, and reducing allocations in hot paths using object pooling.

Developer Tips

Tip 1: Use dotnet-counters and dotnet-dump for production diagnostics without stopping your application. These tools provide real-time metrics and memory dumps for analysis.

Tip 2: Enable EF Core query logging in development to catch N+1 queries early: optionsBuilder.LogTo(Console.WriteLine, LogLevel.Information);

Tip 3: Set up automated performance regression tests in your CI/CD pipeline. Use tools like BenchmarkDotNet to track performance trends.

Tip 4: Use ValueTask<T> instead of Task<T> for high-frequency async operations that often complete synchronously to reduce allocations.

Tip 5: Configure your load balancer with health checks that verify actual database connectivity, not just HTTP 200 responses.
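Tip 4 can be illustrated with a small cache-backed lookup. In this sketch, which assumes a hypothetical GetPriceAsync function and an in-memory dictionary as the cache, the hot path returns a ValueTask<decimal> that completes synchronously on a cache hit, so no Task object is allocated for it.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

var priceCache = new Dictionary<string, decimal>();

// Stand-in for a slow remote call (hypothetical).
async Task<decimal> FetchPriceAsync(string sku)
{
    await Task.Delay(50);
    return sku.Length * 1.5m;
}

// Slow path: fetch the value, remember it, and return it.
async Task<decimal> LoadAndCacheAsync(string sku)
{
    decimal price = await FetchPriceAsync(sku);
    priceCache[sku] = price;
    return price;
}

// Cache hits complete synchronously; only misses go through the async path.
ValueTask<decimal> GetPriceAsync(string sku)
{
    if (priceCache.TryGetValue(sku, out decimal cached))
        return new ValueTask<decimal>(cached);             // no Task allocation

    return new ValueTask<decimal>(LoadAndCacheAsync(sku)); // wraps the Task for the slow path
}

ValueTask<decimal> first = GetPriceAsync("ABC");
bool firstWasSync = first.IsCompleted;   // expected false: the fetch is still pending
decimal price1 = await first;

ValueTask<decimal> second = GetPriceAsync("ABC");
bool secondWasSync = second.IsCompleted; // true: served from the cache
decimal price2 = await second;

Console.WriteLine($"{price1} (sync: {firstWasSync}), {price2} (sync: {secondWasSync})");
```

A ValueTask<T> should be awaited only once, so callers that need to await a result several times or store it should convert it with AsTask() first.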

FAQ

Why is my .NET API slow under load?

Common causes include synchronous I/O blocking threads, inefficient database queries, missing caching, excessive garbage collection, and thread pool starvation. Profile your application to identify the specific bottleneck before optimizing.

How can I improve ASP.NET Core API response time?

Implement async/await for I/O operations, optimize Entity Framework queries with AsNoTracking() and projections, add distributed caching with Redis, enable response compression, and reduce object allocations in hot paths.

What is the best way to profile .NET API performance?

Use MiniProfiler for development, Application Insights or OpenTelemetry for production monitoring, and dotnet-counters for real-time metrics. For deep analysis, use dotMemory or dotTrace to identify memory leaks and CPU hotspots.

Should I use async/await for all methods in .NET API?

No. Use async/await for I/O-bound operations (database, HTTP calls, file I/O). For CPU-bound operations, async adds overhead without benefit. Always use async for database queries and external API calls.

How does caching improve .NET API performance?

Caching stores results of expensive operations (database queries, computations) in fast memory (Redis, IMemoryCache). Subsequent requests retrieve cached data instead of re-executing expensive operations, reducing response time by 70-95% for cacheable endpoints.

Recommended Related Articles

- C# Async/Await: Performance & Best Practices - Deep dive into async patterns and pitfalls

- .NET Memory Management Explained for Developers - Understanding stack, heap, and GC

- 10 .NET API & Security Interview Questions - RESTful API design and security patterns

- The Ultimate Guide to .NET Interview Questions - System design and architecture

- C# String Performance Showdown - String manipulation optimization techniques

Developer Interview Questions

- Explain the difference between Task and ValueTask. When would you use ValueTask in a high-performance API?

- How does async/await work under the hood in C#? Describe the state machine the compiler generates.

- What is the N+1 query problem in Entity Framework, and how do you prevent it?

- Describe how connection pooling works in ADO.NET. What happens when the pool is exhausted?

- How would you diagnose and fix thread pool starvation in a production .NET API?

Conclusion

Optimizing .NET API performance requires systematic profiling, understanding of runtime internals, and targeted fixes for identified bottlenecks. The seven techniques covered—async I/O, query optimization, strategic caching, reduced allocations, response compression, proper DI lifetimes, and continuous monitoring—address the most common performance issues in production APIs.

Start by measuring your current performance baseline using profiling tools. Identify your slowest endpoints and most frequent database queries. Apply these optimizations iteratively, measuring impact after each change. Remember that premature optimization without data leads to wasted effort—always profile first.

High-performance .NET APIs don't happen by accident. They result from deliberate architectural decisions, efficient code patterns, and continuous monitoring in production. By implementing these proven techniques, you'll build APIs that scale efficiently, respond quickly, and provide excellent user experience even under heavy load.
