Memory management is one of the foundations of performance in modern backend systems. Whether you are building high-traffic APIs, microservices, or distributed systems, understanding how memory works inside the .NET runtime can significantly improve performance, stability, and scalability.

Many production performance issues originate from poor memory usage patterns rather than slow algorithms. Excessive allocations, improper object lifetimes, and inefficient data structures often cause latency spikes and increased garbage collection pressure.

For backend engineers working with ASP.NET Core and C#, mastering memory management is essential for designing high-performance services.

This guide explains how .NET manages memory internally, how the garbage collector works, and how developers can optimize applications for production workloads.

Quick Overview

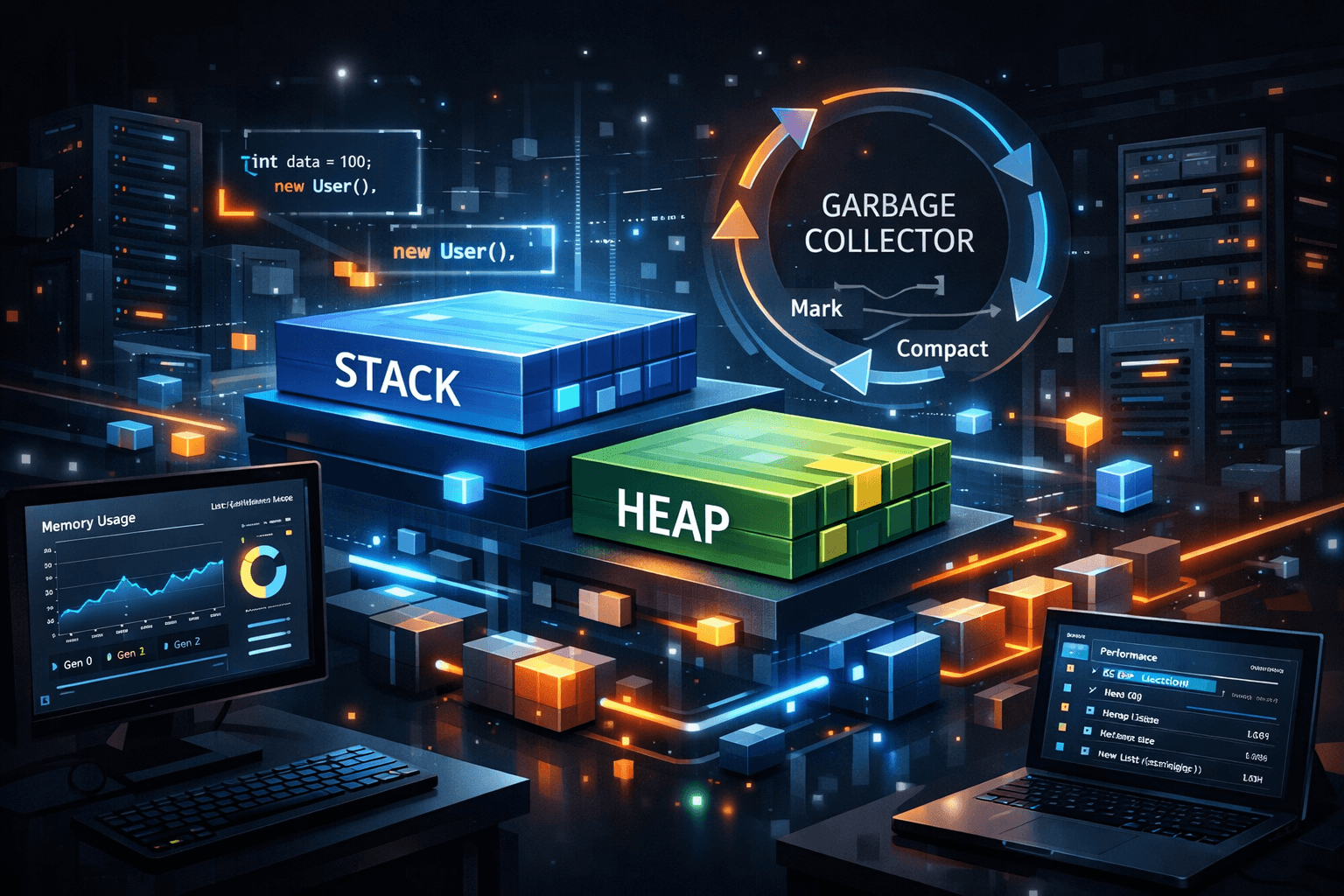

.NET automatically manages memory through a managed runtime and a generational garbage collector.

- The Stack holds method call frames and local variables, including value-type locals.

- The Heap stores instances of reference types, along with any value-type fields they contain.

- The Garbage Collector (GC) reclaims unused memory automatically.

- Memory is organized into generations to optimize collection performance.

Understanding these components allows developers to avoid common performance issues such as excessive allocations and memory leaks.

1. Stack vs Heap in .NET

The .NET runtime divides memory into two major regions: the stack and the heap.

Stack Memory

The stack is a fast, structured memory region used for:

- Local variables

- Method calls

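- Value-type locals and parameters (a value type that is a field of a class lives on the heap with its containing object)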

- Execution frames

Stack allocations are extremely fast because memory is simply pushed and popped as methods execute.

Heap Memory

The heap stores instances of reference types and other dynamically allocated objects, including:

- Classes

- Arrays

- Strings

- Objects created with new

Unlike the stack, heap memory must be reclaimed by the garbage collector.

Example: Value Type vs Reference Type

int number = 10; // local value type: stored on the stack

class User

{

public string Name { get; set; }

}

User user = new User(); // the User object is stored on the heap; the local variable holds a reference to it

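Small value types are often cheaper than reference types because value-type locals avoid heap allocation and are not tracked by the garbage collector. The advantage is not universal: large structs are copied on every assignment, and boxing a value type moves it to the heap.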

Developer Tip: Frequent heap allocations increase GC pressure and can degrade API performance.
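The difference between the two kinds of types is easiest to see on assignment. The sketch below uses two hypothetical types, PointStruct and PointClass, that are not part of any library; it only illustrates that assigning a struct copies the value while assigning a class instance copies a reference to a single heap object.

```csharp
using System;

// Hypothetical types, for illustration only.
public struct PointStruct
{
    public int X;
}

public class PointClass
{
    public int X;
}

public static class CopySemanticsDemo
{
    // Mutates a copy of each kind of value and returns what the originals hold afterwards.
    public static (int StructX, int ClassX) Run()
    {
        var s1 = new PointStruct { X = 1 };
        var s2 = s1;   // copies the value: s1 and s2 are independent
        s2.X = 99;

        var c1 = new PointClass { X = 1 };
        var c2 = c1;   // copies the reference: c1 and c2 point at the same heap object
        c2.X = 99;

        return (s1.X, c1.X); // the struct original is unchanged, the class original is not
    }
}
```

Calling CopySemanticsDemo.Run() returns (1, 99): the struct original keeps its value, while the change made through c2 is visible through c1.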

A deeper performance comparison can be seen in C# String Performance Comparison, which shows how different allocation patterns affect runtime behavior.

2. How the .NET Garbage Collector Works

The garbage collector automatically frees memory used by objects that are no longer referenced by the application.

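The GC uses a generational model. It relies on the observation that most objects die young, so short-lived objects can be collected cheaply without scanning the entire heap.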

GC Generations

| Generation | Purpose | Typical Objects |
|---|---|---|
| Gen 0 | Short-lived objects | Temporary allocations |
| Gen 1 | Intermediate lifetime | Objects surviving Gen 0 |
| Gen 2 | Long-lived objects | Caches, static objects |

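New objects are normally allocated in Generation 0; very large objects are the exception and go to the Large Object Heap. An object that survives a collection is promoted to the next generation, from Gen 0 to Gen 1 and then to Gen 2.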
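Promotion can be observed from code with GC.GetGeneration. The following is a minimal sketch, assuming default GC settings; the GenerationDemo class is a hypothetical name, and the forced GC.Collect() calls are there only to make the demonstration repeatable, not as a recommended practice.

```csharp
using System;

public static class GenerationDemo
{
    // Returns the generation of one object before and after two forced collections.
    public static (int Before, int AfterFirst, int AfterSecond) Run()
    {
        var survivor = new object();
        int before = GC.GetGeneration(survivor);

        GC.Collect(); // forced collection, for demonstration only
        int afterFirst = GC.GetGeneration(survivor);

        GC.Collect();
        int afterSecond = GC.GetGeneration(survivor);

        GC.KeepAlive(survivor); // keep the object reachable up to this point
        return (before, afterFirst, afterSecond);
    }
}
```

On a typical run the three values increase as the object survives each collection, but the exact numbers depend on the runtime and its configuration.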

GC Lifecycle

The garbage collector performs the following phases:

- Mark reachable objects

- Identify unreachable objects

- Compact memory

- Reclaim unused memory

Compaction moves surviving objects next to each other, which reduces fragmentation and keeps allocation cheap because new objects can be placed in contiguous free space.
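One way to see these collection cycles happening is to compare GC.CollectionCount before and after a burst of short-lived allocations. This is a rough sketch under assumed defaults: CollectionCountDemo is a hypothetical name, the allocation sizes are arbitrary, and how many collections actually run depends on the machine and GC mode.

```csharp
using System;

public static class CollectionCountDemo
{
    // Allocates many short-lived arrays and returns how many collections
    // ran for Gen 0, Gen 1, and Gen 2 while doing so.
    public static int[] Run()
    {
        int[] before =
        {
            GC.CollectionCount(0),
            GC.CollectionCount(1),
            GC.CollectionCount(2)
        };

        for (int i = 0; i < 200_000; i++)
        {
            var temp = new byte[1024]; // becomes garbage on the next iteration
            GC.KeepAlive(temp);
        }

        return new[]
        {
            GC.CollectionCount(0) - before[0],
            GC.CollectionCount(1) - before[1],
            GC.CollectionCount(2) - before[2]
        };
    }
}
```

In most environments the Gen 0 delta is the largest of the three, which matches the idea that short-lived garbage is reclaimed by cheap Gen 0 collections.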

3. Memory Allocation Patterns in C#

Developers often unintentionally create excessive allocations that trigger frequent garbage collection.

Example: String Allocation

string result = "";

for(int i = 0; i < 1000; i++)

{

result += i;

}

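Strings are immutable, so each iteration allocates a new string and copies everything accumulated so far. The loop produces about 1,000 intermediate strings that immediately become garbage.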

Optimized Version

using System.Text;

var builder = new StringBuilder();

for(int i = 0; i < 1000; i++)

{

builder.Append(i);

}

var result = builder.ToString();

This pattern reduces allocations and improves performance.
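To check the difference rather than take it on faith, the two loops can be measured with GC.GetAllocatedBytesForCurrentThread. The sketch below wraps both versions in a hypothetical StringAllocationDemo class; the helper name MeasureAllocatedBytes is an assumption of this example, and the exact byte counts vary by runtime version.

```csharp
using System;
using System.Text;

public static class StringAllocationDemo
{
    // Returns the number of bytes allocated on the current thread while running the action.
    public static long MeasureAllocatedBytes(Func<string> action)
    {
        long before = GC.GetAllocatedBytesForCurrentThread();
        string result = action();
        long after = GC.GetAllocatedBytesForCurrentThread();
        GC.KeepAlive(result);
        return after - before;
    }

    // Repeated concatenation: every += allocates a new string.
    public static string Concat()
    {
        string result = "";
        for (int i = 0; i < 1000; i++)
        {
            result += i;
        }
        return result;
    }

    // StringBuilder: appends into an internal buffer and builds one final string.
    public static string Build()
    {
        var builder = new StringBuilder();
        for (int i = 0; i < 1000; i++)
        {
            builder.Append(i);
        }
        return builder.ToString();
    }
}
```

Comparing MeasureAllocatedBytes(StringAllocationDemo.Concat) with MeasureAllocatedBytes(StringAllocationDemo.Build) should show the concatenation loop allocating far more memory for the same output.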

For more performance-related mistakes see 50 C# Performance Mistakes That Slow Down APIs.

4. Large Object Heap (LOH)

Objects of 85,000 bytes or more are allocated on the Large Object Heap (LOH).

Examples include:

- Large arrays

- Big JSON payloads

- Large byte buffers

LOH allocations are expensive because the LOH is collected only during Gen 2 collections and is not compacted by default, so freed space can become fragmented.

Example

byte[] buffer = new byte[100000]; // allocated on Large Object Heap
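Whether an array landed on the LOH can be checked indirectly: the runtime reports LOH objects as belonging to Generation 2. The following sketch assumes the default LOH threshold has not been changed through configuration; LohDemo is a hypothetical name.

```csharp
using System;

public static class LohDemo
{
    // Returns the reported generation of a small array and of a large array.
    public static (int SmallGen, int LargeGen) Run()
    {
        var small = new byte[1_000];    // well below the LOH threshold
        var large = new byte[100_000];  // above the threshold, allocated on the LOH

        int smallGen = GC.GetGeneration(small);
        int largeGen = GC.GetGeneration(large);

        GC.KeepAlive(small);
        GC.KeepAlive(large);
        return (smallGen, largeGen);
    }
}
```

The large array is reported in the highest generation immediately after allocation, while the small array normally starts in Gen 0.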

Performance Considerations

- Avoid repeatedly allocating large objects

- Reuse buffers when possible

- Use object pools

Developer Tip: Large Object Heap fragmentation is a common cause of memory pressure in high-throughput APIs.
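Buffer reuse is commonly done with ArrayPool<T> from System.Buffers. The sketch below is one possible shape, not a prescribed pattern: BufferReuseDemo and ProcessPayload are hypothetical names, and filling the buffer with ones stands in for real work such as reading a request body.

```csharp
using System;
using System.Buffers;

public static class BufferReuseDemo
{
    // Rents a buffer from the shared pool, does some placeholder work on it,
    // and returns it to the pool instead of leaving it for the GC.
    public static int ProcessPayload(int size)
    {
        byte[] buffer = ArrayPool<byte>.Shared.Rent(size);
        try
        {
            // Rent may hand back a larger array than requested,
            // so only the first `size` bytes are used.
            Span<byte> span = buffer.AsSpan(0, size);
            span.Fill(1); // placeholder for real work

            int sum = 0;
            foreach (byte b in span)
            {
                sum += b;
            }
            return sum;
        }
        finally
        {
            ArrayPool<byte>.Shared.Return(buffer);
        }
    }
}
```

Because the array goes back to the pool in the finally block, repeated calls with large sizes can reuse the same memory instead of creating a new LOH allocation each time.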

5. Memory Leaks in .NET Applications

Even with automatic garbage collection, memory leaks can still occur.

Common Causes

- Static references

- Event handler leaks

- Unreleased unmanaged resources

- Unbounded in-memory caches that never evict entries

Example: Event Handler Leak

publisher.SomeEvent += HandleEvent;

The publisher's event holds a reference to every subscriber. If a handler is never unsubscribed, the subscriber stays reachable and cannot be collected for as long as the publisher is alive.

Fix

publisher.SomeEvent -= HandleEvent;
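A common way to make sure the unsubscribe actually happens is to tie it to IDisposable. The sketch below assumes simple Publisher and Subscriber classes invented for this example; only the SomeEvent and HandleEvent names come from the snippet above.

```csharp
#nullable enable
using System;

// Hypothetical publisher exposing the event from the example above.
public class Publisher
{
    public event EventHandler? SomeEvent;

    public void Raise() => SomeEvent?.Invoke(this, EventArgs.Empty);
}

// Hypothetical subscriber that subscribes on construction and unsubscribes on Dispose.
public sealed class Subscriber : IDisposable
{
    private readonly Publisher _publisher;

    public int HandledCount { get; private set; }

    public Subscriber(Publisher publisher)
    {
        _publisher = publisher;
        _publisher.SomeEvent += HandleEvent;
    }

    private void HandleEvent(object? sender, EventArgs e) => HandledCount++;

    // Removing the handler drops the publisher's reference to this object.
    public void Dispose() => _publisher.SomeEvent -= HandleEvent;
}
```

Wrapping the subscriber in a using statement, or disposing it when its owner is torn down, removes the handler so the publisher no longer keeps the subscriber alive.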

6. Debugging Memory Issues in Production

Memory problems often appear only under production workloads.

Common tools used by backend engineers include:

- dotnet-counters

- dotnet-trace

- Visual Studio Diagnostic Tools

- Application Insights

Example: Monitoring GC

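dotnet-counters monitor --process-id <PID> --counters System.Runtime

Replace <PID> with the ID of the target process. The command shows live runtime counters for that process, including GC heap size, allocation rate, and per-generation collection counts.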
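Similar numbers can also be read from inside the process, which is useful for logging or a diagnostics endpoint. The following is a minimal sketch; GcSnapshot and Capture are hypothetical names, and the choice of fields is only an example.

```csharp
using System;

public static class GcSnapshot
{
    // Builds a one-line summary of the current GC state for logging.
    public static string Capture()
    {
        // Values in GCMemoryInfo describe the most recent collection,
        // so they can be zero if no collection has happened yet.
        GCMemoryInfo info = GC.GetGCMemoryInfo();

        return "managedBytes=" + GC.GetTotalMemory(false)
            + " heapSizeBytes=" + info.HeapSizeBytes
            + " fragmentedBytes=" + info.FragmentedBytes
            + " gen0=" + GC.CollectionCount(0)
            + " gen1=" + GC.CollectionCount(1)
            + " gen2=" + GC.CollectionCount(2);
    }
}
```

Logging this line at a fixed interval gives a coarse view of heap growth and Gen 2 frequency without attaching an external tool.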

Performance Notes

- Minimize unnecessary allocations

- Avoid large object allocations in hot paths

- Use object pooling for reusable resources

- Optimize high-frequency API endpoints
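The object pooling idea from the list above can be sketched in a few lines. SimplePool<T> below is a hypothetical, deliberately minimal class: it has no size limit and does not reset objects on return. Production code would more likely use an existing pool such as ArrayPool<T> or the Microsoft.Extensions.ObjectPool package.

```csharp
using System;
using System.Collections.Concurrent;

// Hypothetical minimal pool, for illustration only.
public sealed class SimplePool<T> where T : class
{
    private readonly ConcurrentBag<T> _items = new ConcurrentBag<T>();
    private readonly Func<T> _factory;

    public SimplePool(Func<T> factory)
    {
        _factory = factory;
    }

    // Reuses a pooled instance if one is available, otherwise creates a new one.
    public T Rent()
    {
        return _items.TryTake(out var item) ? item : _factory();
    }

    // Puts the instance back so a later Rent call can reuse it.
    // The caller is responsible for resetting its state first.
    public void Return(T item)
    {
        _items.Add(item);
    }
}
```

For example, a SimplePool<StringBuilder> created with () => new StringBuilder() lets a hot path rent a builder, use it, call Clear(), and return it instead of allocating a new one per request.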

Real-World Engineering Scenario

A backend payment API experienced latency spikes during peak traffic.

The root causes were:

- Large object allocations for JSON serialization

- Temporary collections created in request pipelines

- Frequent Gen 2 garbage collection

After optimizing allocations and introducing object pooling, latency stabilized and throughput improved.

Common Mistakes

- Creating objects inside tight loops

- Ignoring object lifetime

- Allocating large arrays repeatedly

- Overusing LINQ in hot paths, where each query can allocate iterators, delegates, and closures

- Holding unnecessary references
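As an illustration of the LINQ point, the sketch below shows the same calculation written two ways. HotPathDemo and both method names are hypothetical; the allocation comments describe typical behavior and may differ between runtime versions.

```csharp
using System;
using System.Linq;

public static class HotPathDemo
{
    // LINQ version: concise, but each call typically allocates an iterator
    // and a closure that captures `threshold`.
    public static int SumAboveLinq(int[] values, int threshold)
    {
        return values.Where(v => v > threshold).Sum();
    }

    // Loop version: same result, with no per-call heap allocations.
    public static int SumAboveLoop(int[] values, int threshold)
    {
        int sum = 0;
        foreach (int v in values)
        {
            if (v > threshold)
            {
                sum += v;
            }
        }
        return sum;
    }
}
```

The LINQ version is fine for code that runs occasionally; the loop is worth the extra lines only on paths that execute many times per request.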


Developer Interview Questions

- What is the difference between stack and heap in .NET?

- How does the .NET garbage collector work?

- What are GC generations?

- What causes memory leaks in managed applications?

- How can you diagnose memory issues in production?

Frequently Asked Questions

What is memory management in .NET?

It refers to how the .NET runtime allocates, tracks, and frees memory automatically using a managed heap and garbage collector.

What triggers garbage collection?

A collection runs when the allocation budget of a generation (most often Gen 0) is exhausted, when the system is low on physical memory, or when code calls GC.Collect() explicitly.

What is the Large Object Heap?

Objects of 85,000 bytes or more (roughly 85 KB) are allocated on the Large Object Heap, which is collected only with Generation 2 and is not compacted by default.

Do .NET applications still have memory leaks?

Yes. Memory leaks can occur when objects remain referenced and therefore cannot be garbage collected.

How can developers reduce memory allocations?

Use efficient data structures, reuse objects, avoid unnecessary collections, and profile memory usage.

Conclusion

.NET memory management provides powerful automatic garbage collection, allowing developers to focus on building features rather than manually managing memory.

However, understanding how the runtime allocates memory, how the garbage collector operates, and how object lifetimes affect performance is critical for building scalable systems.

Developers who master these concepts can significantly improve API performance, reduce latency spikes, and design backend services capable of handling high-traffic workloads.
